Introduction to Transformers-Based Natural Language Processing (NLP), Part 5

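This post was written while taking part in a Datawhale group study. For the full details, see the official Datawhale open-source course "基于transformers的自然语言处理(NLP)入门" (Introduction to Transformers-Based NLP; datawhalechina.github.io).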

Extractive question answering

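Extractive question answering: given a question and a passage, find the span of the passage that answers the question. With the Trainer API and the datasets package, we can easily load the data and then fine-tune a Transformers model.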

Loading the dataset

Structure of the datasets object

Whether it comes from the training, validation, or test split, every question-answering sample has the three keys "context", "question", and "answers".

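Besides the answer text taken from the passage, answers also gives the position where that answer starts, counted in characters from the beginning of the context (position 515 in the example above).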
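
To make the character-offset convention concrete, here is a minimal sketch on a hand-made sample. The context, question, and answer are hypothetical and not taken from the dataset, and `answers_from_offsets` is a helper name invented for this illustration. It only shows that slicing the context at answer_start gives back the answer text.

```python
# A hand-made sample in the SQuAD layout (hypothetical data, not from the dataset).
context = "The Eiffel Tower is located in Paris, the capital of France."
sample = {
    "context": context,
    "question": "Where is the Eiffel Tower located?",
    "answers": {"text": ["Paris"], "answer_start": [context.index("Paris")]},
}


def answers_from_offsets(example):
    """Recover every answer by slicing the context at its character offset."""
    answers = example["answers"]
    return [
        example["context"][start:start + len(text)]
        for text, start in zip(answers["text"], answers["answer_start"])
    ]


recovered = answers_from_offsets(sample)
print(recovered)
```

If the recovered strings ever differ from answers["text"], the offsets and the context are out of sync, which is worth checking before any tokenization.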

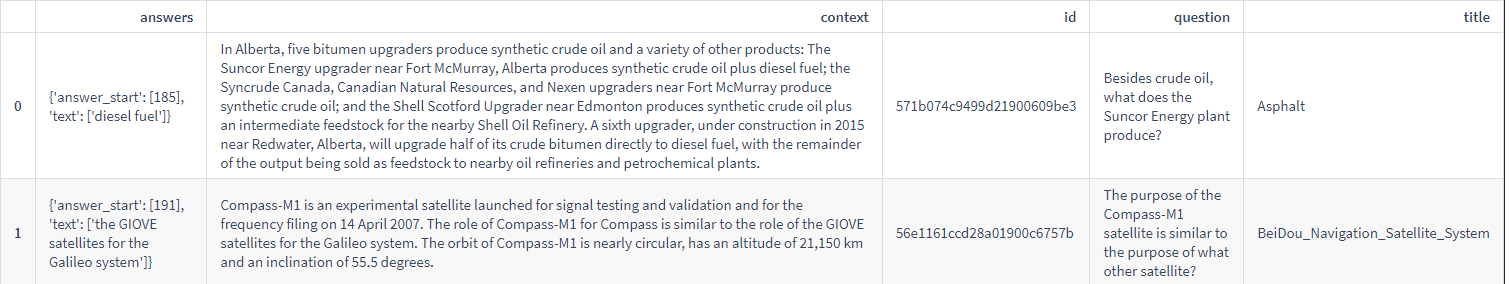

Randomly sample some data and display it.

Data preprocessing

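We also need to think about how pretrained question-answering models handle very long texts. Pretrained models usually impose a maximum input length, so over-long inputs are normally truncated. But if we truncate an over-long context in a <question, context, answer> triple, we may lose the answer, because the answer is a short span extracted from the context.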

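To solve this, the code below finds an over-long example and shows how to handle it. We cut the over-long input into several shorter inputs, each of which satisfies the model's maximum input length. Because the answer may lie exactly where a cut is made, neighbouring slices must be allowed to overlap; in the code this is controlled by the doc_stride parameter.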
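
The slicing idea can be sketched without any tokenizer. The function below is a hypothetical helper, not the library's implementation; it assumes doc_stride counts the tokens shared by two neighbouring slices, as described above.

```python
def split_with_overlap(tokens, max_len, doc_stride):
    """Cut `tokens` into windows of at most `max_len` items.

    Consecutive windows share `doc_stride` items, so a short answer that
    straddles one cut still appears whole in the neighbouring window.
    """
    if not 0 <= doc_stride < max_len:
        raise ValueError("doc_stride must satisfy 0 <= doc_stride < max_len")
    windows, start = [], 0
    while True:
        windows.append(tokens[start:start + max_len])
        if start + max_len >= len(tokens):
            break  # this window already reaches the end of the input
        start += max_len - doc_stride  # step forward, keeping an overlap
    return windows


windows = split_with_overlap(list(range(10)), max_len=4, doc_stride=2)
print(windows)
```

An input that already fits in max_len comes back as a single window, so the same function can be applied to every sample.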

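Pretrained question-answering models usually take the question and the context concatenated together as input, and then look for the answer inside the context.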

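A for loop walks through the dataset looking for an over-long sample. The maximum input length used for the model in this notebook is 384 (512 is another common choice).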

Without truncation, the input is 396 tokens long.

If we now truncate to the maximum length of 384, the information in the overflowing part is lost.

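Note that we generally slice only the context and never the question. Because the context is concatenated after the question, it is the second text, so truncation is restricted to it with only_second. The overlap between slices is set by doc_stride, which is passed to the tokenizer.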

Because the over-long input was sliced, we get several inputs. The lengths of their input_ids are:

We can decode the preprocessed token IDs (input_ids) back into text:

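Because we sliced the over-long text, we need to locate the answer again, this time relative to the start of each context slice. A question-answering model is trained on the answer's position (its start and end token positions) rather than on the answer's token IDs. Each slice therefore has to be related back to the original input: we need to know how each token's position in the sliced context corresponds to its position in the original over-long context. Passing return_offsets_mapping to the tokenizer gives us this mapping: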

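The output above shows, for the first 100 tokens of slice 0 of tokenized_example, their character positions in the original text. Note that the first token, [CLS], is set to (0, 0) because it is not part of either input text. The second token spans characters 0 to 3. We can convert a token id in the slice back to its token, then use offset_mapping to map it back to its position before slicing and find the original text. Because the question is concatenated in front of the context, for these first tokens we simply index into the question.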
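
To show what an offset mapping is without loading a model, the sketch below uses a toy regex tokenizer. It is a hypothetical stand-in and does not split text the way a real subword tokenizer does; the point is only that each (start, end) pair slices the original string back to the token text.

```python
import re


def tokenize_with_offsets(text):
    """Toy tokenizer: words and punctuation marks, each with its character span."""
    return [
        (match.group(), (match.start(), match.end()))
        for match in re.finditer(r"\w+|[^\w\s]", text)
    ]


context = "Beyonce was born in Houston, Texas."
pairs = tokenize_with_offsets(context)
tokens = [token for token, _ in pairs]
offsets = [span for _, span in pairs]

# Each offset pair slices the original string back to the token text.
roundtrip = [context[start:end] for start, end in offsets]
print(list(zip(tokens, offsets)))
```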

We now have the correspondence between positions before and after slicing. We also need sequence_ids to tell the question apart from the context.

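None marks the special tokens, while 0 and 1 mark the first and the second text respectively. Since we passed the question first and the context second, they correspond to the question and the context. Finally, we can find where the annotated answer lies in the preprocessed features: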
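
As a rough illustration, the sketch below builds sequence ids by hand for a BERT-style layout, [CLS] question [SEP] context [SEP]. That layout and both helper names are assumptions made for this example; other models arrange special tokens differently.

```python
def toy_sequence_ids(num_question_tokens, num_context_tokens):
    """Mimic sequence ids for the layout [CLS] question [SEP] context [SEP]."""
    return (
        [None]                        # [CLS]
        + [0] * num_question_tokens   # first text: the question
        + [None]                      # [SEP]
        + [1] * num_context_tokens    # second text: the context
        + [None]                      # [SEP]
    )


def context_token_range(sequence_ids):
    """Return the (first, last) token indices that belong to the context."""
    first = sequence_ids.index(1)
    last = len(sequence_ids) - 1 - sequence_ids[::-1].index(1)
    return first, last


seq_ids = toy_sequence_ids(num_question_tokens=3, num_context_tokens=5)
context_range = context_token_range(seq_ids)
print(seq_ids, context_range)
```

Knowing this range is what lets the answer search skip the question and the special tokens.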

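We should verify the answer position: use the position indices to pick out the corresponding token IDs, decode them to text, and compare the result with the original answer.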

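Sometimes the question comes before the context and sometimes the context comes before the question. Different models have different requirements, so we check the tokenizer's padding_side attribute to decide which order to use.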
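
One way to handle both orders is sketched below. `pair_arguments` is a hypothetical helper written for this post; the keys text, text_pair, and truncation follow the tokenizer's call arguments, and the rule "question first when padding is on the right" is an assumption about how the order is chosen.

```python
def pair_arguments(question, context, padding_side):
    """Pick the text order and truncation mode from the tokenizer's padding side."""
    pad_on_right = padding_side == "right"
    first, second = (question, context) if pad_on_right else (context, question)
    # Only the context may be truncated, wherever it ends up in the pair.
    truncation = "only_second" if pad_on_right else "only_first"
    return {"text": first, "text_pair": second, "truncation": truncation}


right = pair_arguments("Who?", "Some long context ...", padding_side="right")
left = pair_arguments("Who?", "Some long context ...", padding_side="left")
print(right)
print(left)
```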

Now let's put all the steps together. When the context contains no answer, we set both the start and the end label to the index of the CLS token. If the allow_impossible_answers parameter is False, these unanswerable samples are all discarded.
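
The labelling step for a single slice can be sketched as follows. This is a simplified, hypothetical helper rather than the course's preprocessing function; it assumes the context is the second text (sequence id 1), that offsets hold character spans in the original context, and that end_char is answer_start plus the answer length.

```python
def label_feature(offsets, sequence_ids, start_char, end_char, cls_index=0):
    """Return (start_token, end_token) for one slice, or the CLS index twice."""
    context_tokens = [i for i, seq in enumerate(sequence_ids) if seq == 1]
    first, last = context_tokens[0], context_tokens[-1]

    # The answer is not fully inside this slice: point both labels at CLS.
    if offsets[first][0] > start_char or offsets[last][1] < end_char:
        return cls_index, cls_index

    # Last context token starting at or before the answer start,
    # first context token ending at or after the answer end.
    start_token = max(i for i in context_tokens if offsets[i][0] <= start_char)
    end_token = min(i for i in context_tokens if offsets[i][1] >= end_char)
    return start_token, end_token


# Toy slice laid out as [CLS] q q [SEP] c c c c c c [SEP] for the context
# "Paris is the capital of France" (question offsets do not matter here).
offsets = [(0, 0), (0, 3), (4, 9), (0, 0),
           (0, 5), (6, 8), (9, 12), (13, 20), (21, 23), (24, 30), (0, 0)]
sequence_ids = [None, 0, 0, None, 1, 1, 1, 1, 1, 1, None]

france = label_feature(offsets, sequence_ids, start_char=24, end_char=30)
the_capital = label_feature(offsets, sequence_ids, start_char=9, end_char=20)
# A shorter slice that stops after "capital" no longer contains "France".
missing = label_feature(offsets[:8] + [(0, 0)], sequence_ids[:8] + [None], 24, 30)
print(france, the_capital, missing)
```

Restricting the search to context tokens keeps special tokens, whose offsets are (0, 0), from being mistaken for part of the answer.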

Fine-tuning the model

Training arguments

Evaluation

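We need to post-process the model output into the text format we want. The model itself predicts logits for the start and end positions of the answer. If we feed the model one batch during evaluation, the output looks like this: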

The model output is a dict-like structure containing the loss (present because labels were provided) and the logits for the answer start and end.

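Every token in every feature gets a logit. The simplest way to predict the answer is to take the index of the largest start logit as the start position and the index of the largest end logit as the end position.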
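
On plain Python lists this greedy choice looks like the sketch below. The logit values are made up for illustration, and `argmax` is a small helper defined here rather than a library call.

```python
def argmax(values):
    """Index of the largest value in a list."""
    return max(range(len(values)), key=values.__getitem__)


# Made-up logits for a five-token feature.
start_logits = [0.1, 0.3, 4.2, 0.5, 0.2]
end_logits = [0.0, 0.2, 0.9, 3.7, 0.1]

start_index = argmax(start_logits)
end_index = argmax(end_logits)
print(start_index, end_index)
```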

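This strategy works well in most cases. But the prediction may be impossible: for example, the start index may be larger than the end index, or the start and end may point into the question.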

In that case, the simple approach is to fall back to the second-best prediction, then to the third-best if that also fails, and so on.

Because this approach does not readily find a feasible answer, we need something more principled. We add the start and end logits to get a new score, then look at the n_best_size highest-scoring start and end candidates. We derive the corresponding answers from these start/end pairs, check whether each one is valid, sort them by score, and pick the highest-scoring one as the answer.
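
A minimal sketch of this scoring, under the assumption that "valid" here only means the span does not end before it starts. `best_spans` is a hypothetical helper and the default of 20 for n_best_size is an illustrative choice.

```python
def best_spans(start_logits, end_logits, n_best_size=20):
    """Rank (start, end) pairs built from the top start and end indices."""

    def top_indices(logits):
        order = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
        return order[:n_best_size]

    candidates = []
    for start in top_indices(start_logits):
        for end in top_indices(end_logits):
            if start <= end:  # a span cannot end before it starts
                score = start_logits[start] + end_logits[end]
                candidates.append({"score": score, "start": start, "end": end})
    return sorted(candidates, key=lambda c: c["score"], reverse=True)


# Made-up logits where the greedy pair (start=3, end=1) is not a valid span.
start_logits = [0.1, 0.2, 1.0, 5.0]
end_logits = [0.1, 4.0, 3.0, 0.5]
ranked = best_spans(start_logits, end_logits, n_best_size=2)
print(ranked)
```

With n_best_size=2 the only valid pairing is the one-token span at index 2, which the greedy argmax would never have produced.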

We then sort valid_answers by score and take the best one. One step remains: checking that the text at the start and end positions lies in the context rather than in the question.

To do this, we need to add the following two pieces of information to the validation features:

- The ID of the example that produced the feature. Since each example may produce several features, every feature (slice) needs to know which example it came from.

- The offset mapping: maps each token position in a slice back to character positions in the original text.
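
These two fields can be pictured with the sketch below, which builds one validation feature by hand. The example id, offsets, and helper name are hypothetical. Offsets of tokens outside the context are replaced by None so that later steps can tell question tokens from context tokens; context_id would be 0 instead of 1 if the context were passed first.

```python
def mask_non_context_offsets(offsets, sequence_ids, context_id=1):
    """Keep offsets of context tokens and replace all others with None."""
    return [
        span if seq == context_id else None
        for span, seq in zip(offsets, sequence_ids)
    ]


feature = {
    "example_id": "example-0",  # hypothetical id of the source example
    "offset_mapping": mask_non_context_offsets(
        [(0, 0), (0, 3), (4, 9), (0, 0), (0, 5), (6, 8), (0, 0)],
        [None, 0, 0, None, 1, 1, None],
    ),
}
print(feature)
```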

Use the Trainer.predict method to get all the predictions.

The Trainer hides the columns that the model does not use during training (here example_id and offset_mapping, which post-processing needs), so we have to set them back:

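When a token position belongs to the question, the prepare_validation_features function sets its offset mapping to None, so the offset mapping makes it easy to tell whether a token is inside the context. We also discard answers that are too long.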
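
Those two filters, together with the conversion from token indices to text, might look like the sketch below. `spans_to_answers` is a hypothetical helper; candidates are (start, end, score) triples, and the default max_answer_length of 30 tokens is an assumed value.

```python
def spans_to_answers(candidates, offset_mapping, context, max_answer_length=30):
    """Turn (start, end, score) token spans into scored answer texts."""
    answers = []
    for start, end, score in candidates:
        if start >= len(offset_mapping) or end >= len(offset_mapping):
            continue  # index outside the feature
        if offset_mapping[start] is None or offset_mapping[end] is None:
            continue  # points at the question or a special token
        if end < start or end - start + 1 > max_answer_length:
            continue  # reversed span, or answer too long
        start_char, end_char = offset_mapping[start][0], offset_mapping[end][1]
        answers.append({"score": score, "text": context[start_char:end_char]})
    return sorted(answers, key=lambda a: a["score"], reverse=True)


context = "Paris is the capital of France"
offset_mapping = [None, None, None, None,
                  (0, 5), (6, 8), (9, 12), (13, 20), (21, 23), (24, 30), None]
candidates = [(1, 2, 9.0), (9, 9, 7.5), (4, 9, 6.0), (6, 7, 5.0)]
answers = spans_to_answers(candidates, offset_mapping, context, max_answer_length=3)
print(answers)
```

The top-scoring candidate is dropped because it points into the question, and the six-token span is dropped for exceeding the length limit.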

Then simply compare the predicted answer with the ground-truth answer:

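Because the first feature always comes from the first example, that case is relatively easy. For the other features we need a mapping between features and examples. And since one example may be sliced into several features, we also need to gather the answers from all of its features. The following code maps example indices to feature indices.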
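
A minimal sketch of such a mapping, using plain lists of ids in place of the real datasets. The ids and the function name are hypothetical.

```python
from collections import defaultdict


def group_features_by_example(example_ids, feature_example_ids):
    """Map each example index to the indices of the features cut from it."""
    example_index = {ex_id: i for i, ex_id in enumerate(example_ids)}
    features_per_example = defaultdict(list)
    for feature_index, ex_id in enumerate(feature_example_ids):
        features_per_example[example_index[ex_id]].append(feature_index)
    return dict(features_per_example)


# Example "a" was cut into two features, "b" into one, "c" into two.
mapping = group_features_by_example(["a", "b", "c"], ["a", "a", "b", "c", "c"])
print(mapping)
```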

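The last thing to handle is the no-answer case (when squad_v2=True). The code above only considers answers inside the context, so we also need to collect the score of the no-answer prediction, which is given by the start and end logits at the CLS token. If an example has several features, we need the no-answer prediction across all of them, so the final no-answer score is the minimum of the no-answer scores over all features.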

Whenever the final no-answer score is higher than the scores of all other answers, the question is predicted to have no answer.
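
The decision rule can be sketched as follows. The data layout is an assumption made for this example: each feature is a dict holding its null_score (start logit plus end logit at CLS) and a list of (score, text) answers. An empty string stands for "no answer".

```python
def choose_answer(features, squad_v2=True):
    """Pick the final answer for one example from all of its features."""
    all_answers = [answer for f in features for answer in f["answers"]]
    best_score, best_text = max(
        all_answers, key=lambda a: a[0], default=(float("-inf"), "")
    )
    if not squad_v2:
        return best_text
    # The example-level no-answer score is the minimum over its features.
    min_null_score = min(f["null_score"] for f in features)
    return "" if min_null_score > best_score else best_text


answerable = [
    {"null_score": 2.0, "answers": [(5.0, "Paris")]},
    {"null_score": 6.0, "answers": [(1.0, "France")]},
]
unanswerable = [
    {"null_score": 8.0, "answers": [(3.0, "Paris")]},
    {"null_score": 7.0, "answers": [(4.0, "France")]},
]
print(repr(choose_answer(answerable)), repr(choose_answer(unanswerable)))
```

Taking the minimum means that a single feature that is confident an answer exists is enough to keep the example answerable.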

Applying the post-processing function to the raw predictions

Loading the evaluation metric
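
For intuition about what a SQuAD-style metric measures, here is a simplified stand-in written from scratch. It is not the official metric implementation: it assumes the usual normalization (lowercasing, dropping punctuation and English articles) and compares one prediction with one reference.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop punctuation and articles, and collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, reference):
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def f1_score(prediction, reference):
    """Token-level F1 between the normalized prediction and reference."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


em = exact_match("The Eiffel Tower.", "eiffel tower")
f1 = f1_score("Paris France", "Paris")
print(em, f1)
```

Exact match rewards only identical answers, while F1 gives partial credit when the predicted span overlaps the reference.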
